Noah Webster Meets AI

How Dictionaries Became Our Enemy

In 1828, Noah Webster finished a project he had been working on for over two decades. Before writing a single definition, he spent ten years comparing words across twenty languages. His goal was precise: give a self-governing people a common language with settled definitions, so they could read, reason, and govern themselves — without needing anyone else to interpret the world for them.

That goal describes a condition the United States no longer reliably produces. The gap between Webster’s standard and the 2026 record is not the result of drift. It has identifiable causes — and identifiable villains.

The Numbers Don’t Lie

As of 2023, 28 percent of American adults scored at or below Level 1 in literacy — meaning they struggle to read anything beyond short, simple sentences. Roughly one in five adults is considered functionally illiterate. Forty-four percent of American adults do not read a single book in a year.

Among children, the picture is equally stark. Two-thirds of fourth graders in America cannot read proficiently. The declines predate the pandemic. They have continued through it.

This is not an accident of history. It is the output of decisions made by institutions, for institutional reasons and for financial profit.

The Credentialing Apparatus and the Methods It Defended

The institutions responsible for training teachers — university schools of education, their accrediting bodies, and the commercial publishers supplying their curricula — built their professional identity and revenue around whole-language and balanced literacy frameworks. These frameworks treated reading as a process of guessing meaning from pictures, context, and story pattern rather than decoding the words on the page.

The apparatus did not merely resist a better method — it actively produced worse ones, credentialed them, and distributed them at national scale. Whole-language and balanced literacy frameworks were not placeholders waiting to be replaced. They were the product. Generations of teachers trained inside that system entered classrooms equipped to teach guessing, not reading — and had no professional framework that said otherwise.

The Science of Reading Makes Things Worse

Phonics instruction — teaching children to connect letters to sounds — was already in use before the Science of Reading (SoR) became the reform movement’s banner. Crediting SoR with introducing phonics assigns it an importance the record doesn’t support. Phonics is not SoR’s contribution. It is what SoR rode in on.

What SoR actually introduces is a comprehension model built on a damaging premise: that proper use of a standard dictionary kills comprehension. Students are taught to infer word meaning from surrounding context rather than consult a settled definition. Looking up a word is treated as an interruption — a thing that breaks the reading process rather than grounds it.

Webster spent ten years building the standard those definitions live in. SoR instructs students not to use it. That is not a partial fix with a side effect. It is an attack on the foundation of Webster’s Ideal Scene dressed as a reading reform.

The Attention Economy Found a Weakened Population

Platform companies did not create the literacy gap. They built products that are more profitable when users consume short-form content than when they read deeply. A population of weaker readers — trained to guess at meaning rather than look it up, and habituated to passive consumption — is a population easier to keep on a scroll.

Webster’s project required citizens capable of sustained engagement with long, difficult text. He spent years doing comparative research before drawing a single conclusion. The short-form content environment does not require or reward that capacity — and the longer the literacy crisis runs, the more naturally that environment fills the space reading used to occupy.

Language Itself Has Become a Contested Resource

Webster never objected to specialized vocabulary. Scientists, lawyers, engineers, and tradespeople have always developed precise technical language for their domains — that is a sign of a healthy intellectual culture, not a threat to it. A cardiologist’s terminology belongs to cardiology. A programmer’s argot belongs to programming. Webster celebrated that kind of precision.

What he was building was different: the civic lexicon. The words through which a people governs itself. Constitution. Court. Congress. Law. Citizen. These are not domain terms belonging to any specialty. They are the shared operating language of self-governance — the words every American needs to mean the same thing at the same time for the republic to function.

That lexicon is now under active contest. Ideological networks on both ends of the political spectrum have worked to redefine core civic terms — not to clarify them, but to capture them. When the meaning of woman, equity, misinformation, or public health emergency shifts based on who holds institutional authority, the result is not a richer vocabulary. It is a civic language that no longer has a settled floor. At the same time, executive action has stripped established terms from federal agencies — not replacing them with clearer ones, but removing the shared reference point entirely.

Both movements are doing the same thing from opposite directions: asserting that civic definitions belong to whoever holds power, not to a literate people capable of reading the primary sources and deciding for themselves.

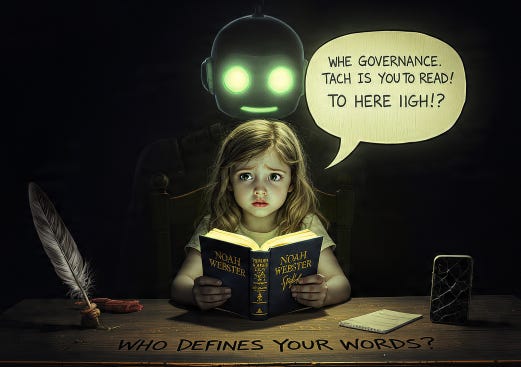

That contest is only winnable against a population that cannot go to the source. A people that reads, looks things up, and consults the settled standard is a people that can say: that is not what this word means. A people that guesses at meaning from context — or outsources the question to a machine — cannot.

AI Completes the Picture

Publicly accessible AI does not merely distract from reading. It offers to make reading and writing unnecessary. It produces fluent text without the understanding that producing text is supposed to develop. It answers questions without requiring the questioner to have engaged with the record.

Webster identified authorship as the chief glory of a nation. He meant something specific: a people capable of producing written argument from their own understanding, on their own authority — not a summary of what a machine decided they probably meant to say. A people that increasingly outsources that capacity is not producing authors. It is producing users.

The AI question is not resolved. But it lands on a population already weakened by decades of preventable literacy failure, trained by platforms toward short-form consumption, and handed a tool that offers to make the remaining effort unnecessary.

The Pattern and Who Benefits

Each of these forces has its own origin and its own beneficiaries. None required coordination to produce the same outcome.

The education credentialing apparatus defended its methods because its professional existence depended on them. The Science of Reading movement replaced one flawed framework with another while piling undue importance on phonics — a method already in use — and embedding a comprehension model that treats Webster’s dictionary as an obstacle. Platform companies built what their revenue model required. Ideological networks pursued definitional control through the available channels. AI developers built what the technology made possible and the market rewarded.

What they share: each benefits from a population that accepts mediated interpretation rather than reads, reasons, and decides independently. The less capable the reader — and the more hostile the pedagogy (the method and practice of teaching) to the settled standard — the more necessary the mediator.

What Resolution Actually Requires

The Science of Reading is not the answer to this problem. It is part of it. A reform that piles importance on phonics while teaching students that consulting a dictionary interrupts comprehension does not restore Webster’s Ideal Scene. It replaces one attack on it with another.

Real resolution requires returning control of teacher preparation to accountable bodies that operate on the actual evidence — free from the credentialing apparatus that has defended failed methods for decades. It requires a comprehension model that treats the settled standard — the dictionary — as the destination of reading, not an interruption to it. It requires platform design accountability that treats sustained-reading displacement as a measurable public harm. And it requires defining AI’s role in civic authorship before the question of what constitutes a human author becomes unanswerable in practice.

None of this is underway at the scale the gap requires. The Ideal Scene, a literate people that can accept or dismiss those who claim to speak for it and pass that standard forward, remains unrealized.
